Reading Club #1: Visualization Literacy Tests

Reporting on the first FILWD reading club on visualization literacy tests

Last week, I met online with a group of FILWD readers for our first reading club on data visualization literacy. We were ten people in total, with very diverse backgrounds, expertise, and geographical locations. It was awesome! The goal of this post is to tell you more about the reading club idea, report on our first discussion, and give you information about future reading clubs. Let’s go!

Background

A couple of weeks ago, I posted a message on LinkedIn sharing a collection of papers on the development of tests to measure visualization literacy. The post sparked a few conversations, and at some point, I had a light bulb idea: what if I organize an online reading club? And that’s what I did. I selected a subset of about 200 FILWD readers according to their engagement level with the newsletter (a nice feature Substack provides to authors) and invited them to the reading club. About 45 people expressed an interest, about 15 signed up for the slot I had chosen, and about 10 participated in the meeting (interesting funnel, eh?).

The papers

For this first reading club, I proposed three papers with which I was familiar. All three focus on the development of visualization literacy tests to measure the level of literacy test takers have. As you can imagine, this is a complex task for many reasons. First, one has to define visualization literacy in measurable terms. Second, one has to develop test material that actually measures what one intends to measure. Third, one has to assess the validity of the test through statistical procedures. The three papers look at these problems from different angles and use different approaches.

“A Principled Way of Assessing Visualization Literacy.”

Boy, Jeremy, Ronald A. Rensink, Enrico Bertini, and Jean-Daniel Fekete. 2014. “A Principled Way of Assessing Visualization Literacy.” IEEE Transactions on Visualization and Computer Graphics 20 (12): 1963–72.

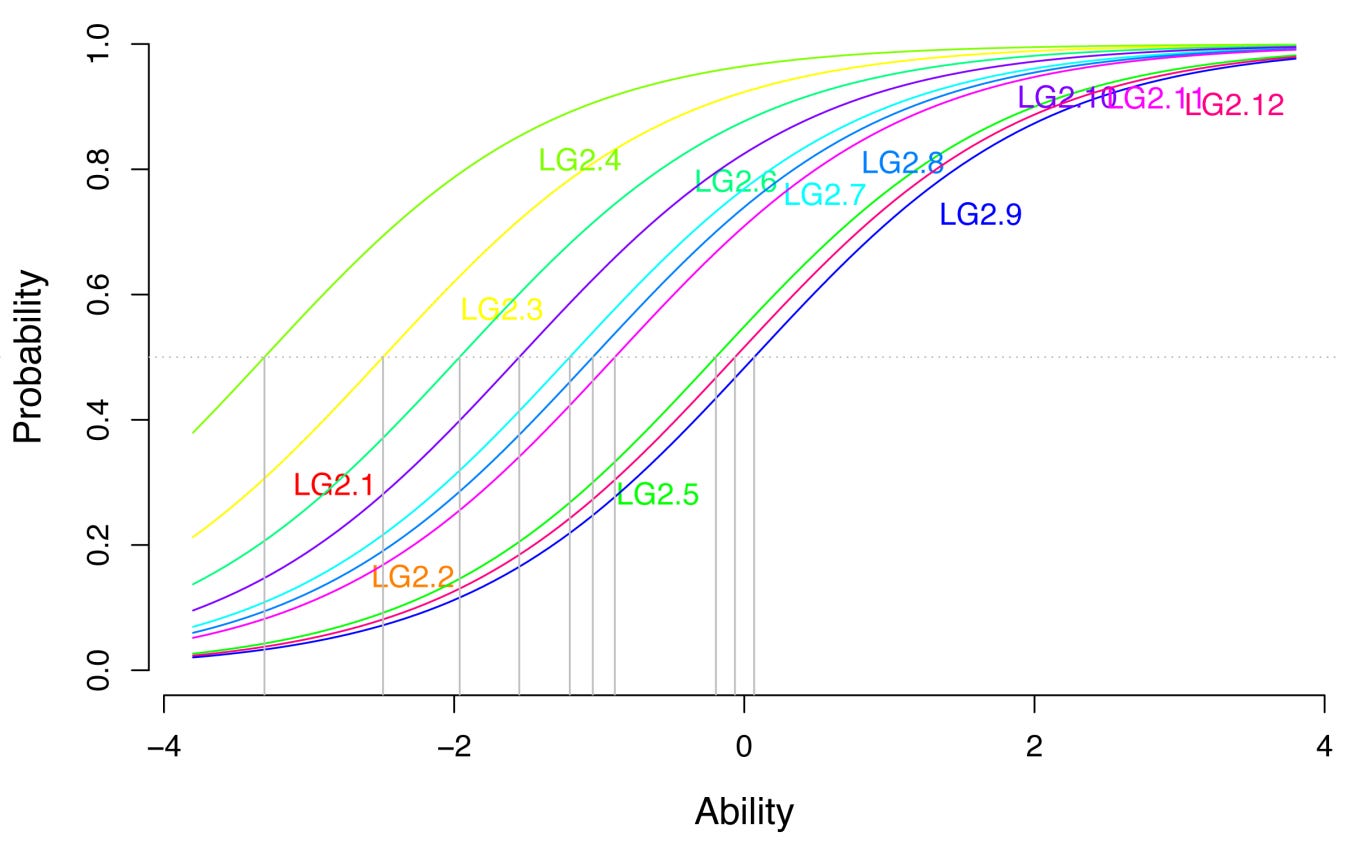

This paper (of which I am a co-author) introduces the concept of data visualization literacy and develops a first formal test to assess it. The test includes only three simple visualization types: line charts, bar charts, and scatter plots. It also defines a set of tasks/questions to ask the test takers: “Maximum (T1), Minimum (T2), Variation (T3), Intersection (T4), Average (T5), and Comparison (T6).” As explained in the paper: “All are standard benchmark tasks in InfoVis. T1 and T2 consist in finding the maximum and minimum data points in the graph, respectively. T3 consists in detecting a trend, similarities, or discrepancies in the data. T4 consists in finding the point at which the graph intersected with a given value. T5 consists in estimating an average value. Finally, T6 consists in comparing different values or trends.” The paper also introduces the use of Item Response Theory for the development of literacy tests for data visualization.

The theory helps create statistical models of the difficulty and discriminability of each test item, which is useful to 1) decide which questions to include in or exclude from the test, 2) assess the overall validity of the test, and 3) create a calibrated score for test takers that takes into account the difficulty of each test item.
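To make this a bit more concrete, here is a minimal sketch of how an Item Response Theory model can turn answers into a calibrated score. It assumes a two-parameter logistic (2PL) model, which is one common IRT form with a difficulty and a discrimination parameter per item; it is not necessarily the exact model used in the paper. The item names and parameter values are made up for illustration, and the ability estimate uses a simple grid search rather than a proper fitting library.

```python
import math

def p_correct(theta, a, b):
    """2PL model: probability that a test taker with ability `theta`
    answers an item with discrimination `a` and difficulty `b` correctly."""
    return 1.0 / (1.0 + math.exp(-a * (theta - b)))

# Hypothetical items: name -> (discrimination a, difficulty b).
# These values are invented for illustration, not taken from any paper.
ITEMS = {
    "bar_chart_maximum": (1.5, -1.0),     # easy item
    "line_chart_variation": (1.0, 0.0),   # medium item
    "scatterplot_comparison": (2.0, 1.5), # hard, highly discriminating item
}

def estimate_ability(responses, items):
    """Maximum-likelihood ability estimate via grid search over [-4, 4].

    `responses` maps item name -> True (correct) or False (incorrect).
    """
    grid = [i / 100 for i in range(-400, 401)]

    def log_likelihood(theta):
        total = 0.0
        for name, correct in responses.items():
            a, b = items[name]
            p = p_correct(theta, a, b)
            total += math.log(p if correct else 1.0 - p)
        return total

    return max(grid, key=log_likelihood)

# Two test takers with the same raw score (1 out of 3) but different patterns.
only_easy = {"bar_chart_maximum": True,
             "line_chart_variation": False,
             "scatterplot_comparison": False}
only_hard = {"bar_chart_maximum": False,
             "line_chart_variation": False,
             "scatterplot_comparison": True}

theta_easy = estimate_ability(only_easy, ITEMS)
theta_hard = estimate_ability(only_hard, ITEMS)
```

In this sketch the two test takers have the same raw score, yet they receive different ability estimates, because the model weighs which items were answered correctly and not just how many.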

“VLAT: Development of a Visualization Literacy Assessment Test.”

Lee, Sukwon, Sung-Hee Kim, and Bum Chul Kwon. 2017. “VLAT: Development of a Visualization Literacy Assessment Test.” IEEE Transactions on Visualization and Computer Graphics 23 (1): 551–60.

This paper appeared a few years later. It builds upon and considerably expands the work done in Boy et al.’s paper. The main innovation is the inclusion of a much wider spectrum of data visualization types and tasks (the figure below shows the visualization types the test includes). The authors also used a different procedure to design and evaluate the test, which is way simpler than Item Response Theory and, in a way, more intelligible.

Another great aspect of this test is that it is readily available online. In fact, if you want to take the test right now, you only need to follow this link, answer the questions, and get your results. As a side note, I don’t know why we did not make it easier to take the test we developed in our paper. In retrospect, it seems like a no-brainer. Now that I am back to looking at visualization literacy, I plan to reboot our project and make the test easier to access.

“CALVI: Critical Thinking Assessment for Literacy in Visualizations.”

Ge, Lily W., Yuan Cui, and Matthew Kay. 2023. “CALVI: Critical Thinking Assessment for Literacy in Visualizations.” In Proceedings of the 2023 CHI Conference on Human Factors in Computing Systems (CHI ’23), Article 815, 1–18. New York, NY, USA: Association for Computing Machinery.

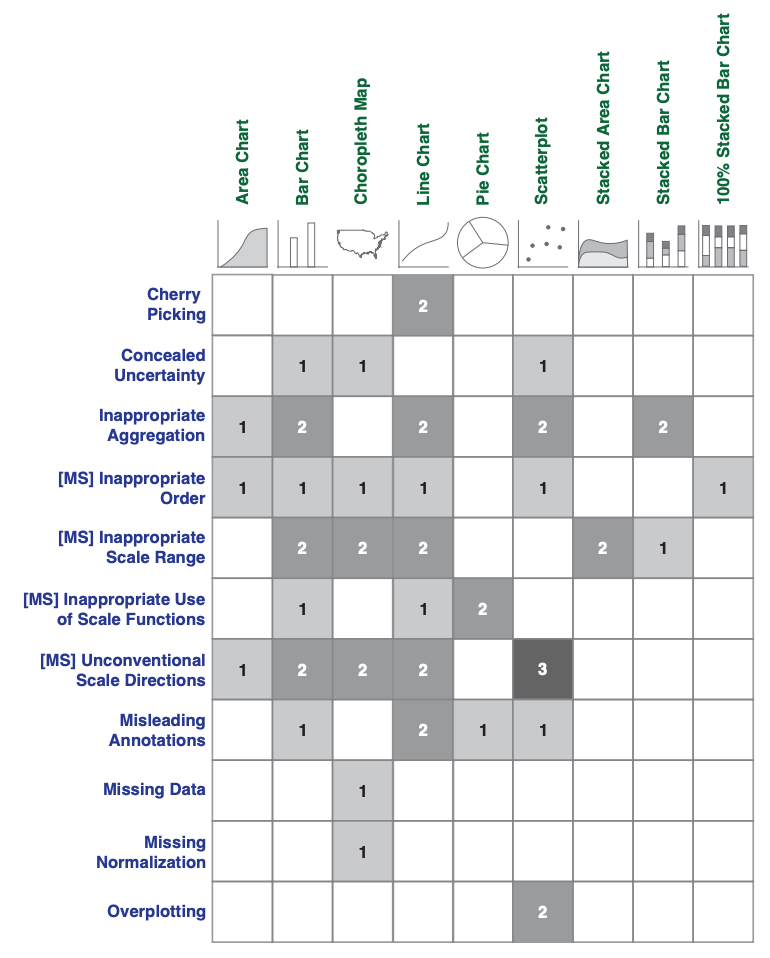

This paper is the most recent and has a very different focus. Instead of measuring the ability to read and interpret well-formed visualizations, it aims to measure people’s ability to spot misleading visualizations. The authors introduce the idea of “misleaders” and use them to create a whole test for data visualization critical thinking. They collected a series of candidate misleading effects from existing papers and a series of visualization types starting from the VLAT. Then, they combined these two sets to form a set of candidate items for the test. The accompanying figure shows the combination of misleaders (in rows) and visualization types (in columns).

One thing I like about their construction process is the realism and diversity criteria they used to create the test items. Realism refers to how likely it is for a person to encounter that item in reality, and diversity refers to having visualizations that are sufficiently different from one another.
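The construction process described above can be sketched in a few lines of code. This is only an illustration of the general idea (cross two sets, then filter the resulting pairs), not the authors' actual procedure. The misleader names are placeholders, and the `UNREALISTIC` set is a hypothetical stand-in for the realism criterion.

```python
from itertools import product

# Placeholder names, invented for illustration only.
MISLEADERS = ["misleader_A", "misleader_B", "misleader_C"]
CHART_TYPES = ["bar chart", "line chart", "scatterplot"]

# Hypothetical pairs judged unlikely to occur in practice (realism criterion).
UNREALISTIC = {("misleader_C", "scatterplot")}

def candidate_items(misleaders, chart_types, excluded=frozenset()):
    """Cross every misleader with every chart type, dropping excluded pairs."""
    return [
        (misleader, chart)
        for misleader, chart in product(misleaders, chart_types)
        if (misleader, chart) not in excluded
    ]

all_pairs = candidate_items(MISLEADERS, CHART_TYPES)
realistic_pairs = candidate_items(MISLEADERS, CHART_TYPES, UNREALISTIC)
```

Each remaining pair is a cell in the misleader-by-chart-type grid for which one or more test items could then be written.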

These three papers form a nice combination because they provide examples of the fundamental design decisions and components of a visualization literacy test. It’s worth mentioning that other tests were developed earlier, though with less of a specific focus on visualization. Other tests have also been developed more recently, but these three are a very good way to get started if one wants to become familiar with the problem of designing a visualization literacy test.

The reading club meeting

The first meeting went incredibly well. We had people from many different backgrounds and geographical locations. We had a quick round of introductions and then dived into each paper, discussing 1) what we found exciting and insightful and 2) what challenges and limitations we identified.

What surprised me about this meeting was how engaged everyone was. Each participant had some interesting observations, and I felt I learned a lot by listening to everyone. This is a testament to the fact that one does not need to be an “academic” to have critical and thoughtful observations on research ideas expressed through papers.

These are some of the most interesting observations from the meeting.

What do these tests measure? One issue with these tests is figuring out what skills they measure. Each test has a different take on what it means for a person to be literate in visualization, and it is not clear exactly how they compare and to what extent they measure the thing we want to capture.

Correlation with other skills? Similarly, we do not yet know how these tests correlate with other skills. What makes a person perform well in such tests? What basic skills do they have? Are some of these skills innate or acquired? To what extent can one improve with specific interventions?

Effect of domain expertise? The tests are designed to be agnostic to specific domains. But in practice, many people consume data about domains they are at least familiar with. Do the tests depend on domain expertise? Are people more capable of reading graphs correctly if they are more familiar with the data and the domain problem?

Cultural aspect of vis literacy. Another interesting aspect is how robust the tests are regarding cultural variations. The most obvious example is cultures that read from right to left. Can these tests adapt to conventions used in different cultures? Do we have enough information regarding which adaptations are required to make these tests available to people in many different regions of the world? It would also be great to translate these tests into multiple languages to test them with a much more diverse set of participants.

Using these tests in practice. One problem with the existing tests is that they are still hard to use in practice, partly because of some of the problems mentioned above and partly because the work done is still too preliminary. However, one participant reported using the CALVI test with a group of students in class and finding that the students struggled to provide correct answers.

Using tests to take action. Another big issue with the current tests is that it’s unclear how they can help identify specific knowledge gaps and how they can direct learners to specific interventions to cover the identified gaps. Ultimately, the goal should be to help people learn the skills we wish to promote. How to link tests to interventions and what interventions to use are not clear yet, and it is certainly a space for future developments.

Can tests be used for adaptive visualizations? Another useful question is whether the tests can be used to create adaptive visualization projects. If we have reliable ways to test and monitor the user’s skills in using data visualizations, can we adapt the visualizations to match these skills, and would that be beneficial?

How about testing visualization design skills? The tests we covered measure the ability to read visualizations and extract information from them. But what if we want to test people’s ability to use data visualizations correctly? To the best of my knowledge, such tests do not exist, but they would be an excellent complement to the tests developed so far. If such tests existed, it would be possible to test the effect of data visualization courses like mine and compare the effect of different approaches. What solutions work best? How can we best teach people to use data visualization?

That’s all for now. I want to thank the reading club participants for the many interesting observations and the lively discussions. I look forward to organizing more reading clubs in the future. If you want to participate, write a comment below, and I will add you to the list.

Thank you for sharing this summary.

I agree that visualization literacy itself is also linked to other forms of skills like language literacy and numeracy. And these skills already vary a lot across cultures. I'm glad to see on-going discussions about cultural variations of vis literacy tests and can't wait to read more thoughts on what it takes to translate and adapt the existing tests across cultures.
